Abstract:

Traditional project plans assume you know the requirements upfront. AI projects do not work that way: they are discovery‑driven.

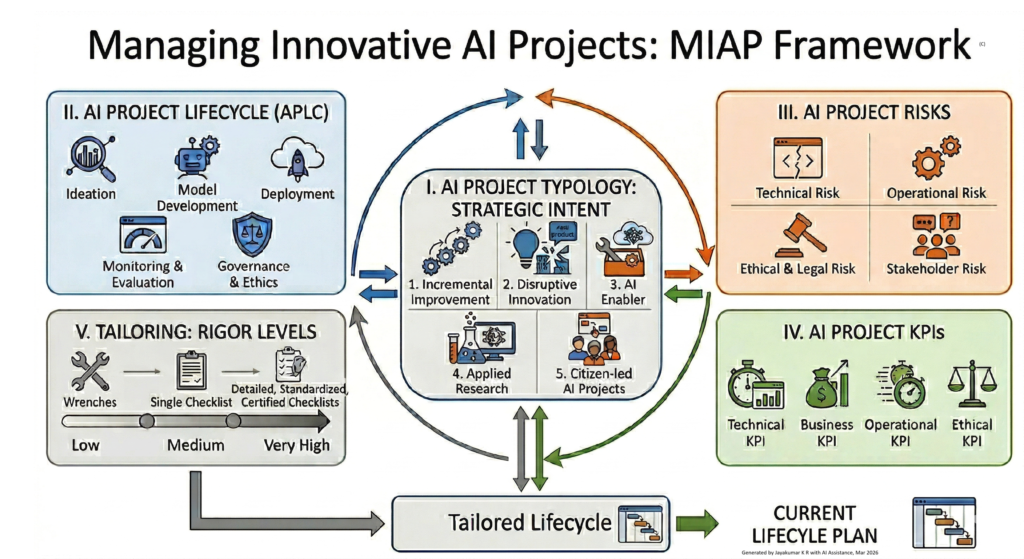

This article introduces the Managing Innovative AI Projects (MIAP) framework, built on five interconnected pillars: (1) project type (Incremental, Disruptive, etc.), (2) adaptive AI lifecycle, (3) AI‑specific risks, (4) multi‑dimensional KPIs, and (5) adjustable rigor levels.

Unlike rigid checklists, MIAP embraces iteration: technical, data, value, measurement, and risk discovery all feed back into the plan. The result is a tailored lifecycle that balances flexibility with accountability. A must‑read for anyone moving beyond AI hype into disciplined delivery.

In our first two issues, we laid the foundation. The AI Project Typology gave us a language to classify the kind of innovation we are undertaking: Incremental, Disruptive, Applied Research, AI Enabler, or Citizen‑Led. In our second issue, we brought those categories to life with real‑world examples. But classification alone is not delivery.

To truly master the discipline of delivering AI value, we need more than a taxonomy. We need an integrated way of thinking that connects what we are building to how we build it adaptively, responsibly, and with measurable outcomes. That is the purpose of the Managing Innovative AI Projects (MIAP) framework.

This framework, developed in my book co‑authored with Prof. Alain Abran, is not a rigid checklist. It is a structured yet flexible approach that recognizes the iterative, uncertain nature of AI initiatives. It consists of five interconnected elements that work together to guide a project from idea to impact.

The Five Pillars of the Framework

1. Identify the Project Type – Using the AI Project Typology, the team must first determine whether the initiative is an incremental enhancement, a market‑creating disruption, a foundational research effort, a tool for other builders, or a citizen‑led solution. This first step is critical because each type carries different assumptions, risks, and success criteria.

2. Navigate the AI Project Lifecycle (APLC) – AI development is not linear. The APLC defines five phases—Ideation & Feasibility, Data Acquisition & Preparation, Model Design & Validation, Deployment & Monitoring, and Operationalization & Governance. Unlike traditional lifecycles, these phases are revisited as the team learns from data and stakeholders.

3. Manage AI‑Specific Risks – AI projects face unique challenges: data drift, model bias, regulatory uncertainty, and ethical dilemmas. Our risk framework, grounded in ISO 31000 and the NIST AI RMF, categorises risks into Technical, Operational, Ethical & Legal, and Stakeholder domains. Proactive risk management is embedded throughout the lifecycle, not tacked on at the end.

4. Measure with AI‑Focused KPIs – Traditional project metrics (e.g., schedule variance) are insufficient. We need KPIs that span four dimensions: Technical (accuracy, latency), Business (ROI, adoption), Operational (deployment frequency, cloud cost efficiency), and Ethical (fairness scores, audit pass rates). These KPIs are not static; they evolve with the project.

5. Tailor the Lifecycle with Rigor Levels – A one‑size‑fits‑all lifecycle guarantees failure. Using a concept of project rigor—from Low to Very High—the team should adjust the depth and intensity of activities across each APLC phase. A citizen‑led prototype may use low‑rigor, pre‑built tools; a medical diagnostic system demands very‑high‑rigor validation and human‑in‑the‑loop oversight.
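
To make pillar 5 concrete, here is a minimal Python sketch of how rigor might filter the activities in each APLC phase. The phase names come from pillar 2. Everything else is an assumption made for illustration: the article names only the Low and Very High endpoints, so the two middle levels are invented, and the `ACTIVITIES` catalogue and `tailor_lifecycle` helper are hypothetical placeholders, not the framework's own definitions.

```python
from enum import IntEnum


class Rigor(IntEnum):
    # Only LOW and VERY_HIGH are named in the article;
    # MEDIUM and HIGH are assumed intermediate levels.
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    VERY_HIGH = 4


# The five APLC phases, as listed in pillar 2.
APLC_PHASES = (
    "Ideation & Feasibility",
    "Data Acquisition & Preparation",
    "Model Design & Validation",
    "Deployment & Monitoring",
    "Operationalization & Governance",
)

# Hypothetical activities, each paired with the minimum rigor at which it applies.
# Only one phase is filled in to keep the sketch short.
ACTIVITIES = {
    "Model Design & Validation": [
        ("Evaluate with pre-built tools", Rigor.LOW),
        ("Hold-out test set evaluation", Rigor.MEDIUM),
        ("Bias and fairness audit", Rigor.HIGH),
        ("Human-in-the-loop validation", Rigor.VERY_HIGH),
    ],
}


def tailor_lifecycle(rigor):
    """Return {phase: [activity names]} keeping activities whose minimum rigor is met."""
    return {
        phase: [name for name, minimum in ACTIVITIES.get(phase, []) if minimum <= rigor]
        for phase in APLC_PHASES
    }


prototype = tailor_lifecycle(Rigor.LOW)
diagnostic = tailor_lifecycle(Rigor.VERY_HIGH)
print(prototype["Model Design & Validation"])
print(diagnostic["Model Design & Validation"])
```

In this sketch, the low‑rigor plan keeps only the lightest validation activity, while the very‑high‑rigor plan keeps all four, including human‑in‑the‑loop validation. A real catalogue would cover every phase and would likely depend on the project type as well.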

The Framework in Action: An Iterative Journey

These five elements do not simply flow in a straight line. The framework is inherently iterative:

- The project type influences which phases of the APLC require more emphasis.

- Early risk assessments may force the team to revisit the lifecycle or even question the project type.

- KPIs help the team decide when to adjust rigor up or down as it progressively learns.
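
The third feedback loop above, KPIs driving rigor, can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a rule prescribed by the framework: the `KPI` class, the `adjust_rigor` function, the intermediate rigor levels, and the decision rule itself (a missed Ethical KPI raises rigor one level; all KPIs met lowers it one level) are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass

# "Low" and "Very High" come from the article; the middle levels are assumed.
RIGOR_LEVELS = ["Low", "Medium", "High", "Very High"]


@dataclass
class KPI:
    name: str
    dimension: str  # "Technical", "Business", "Operational", or "Ethical"
    value: float
    target: float
    higher_is_better: bool = True

    def met(self) -> bool:
        """True when the measured value satisfies the target."""
        if self.higher_is_better:
            return self.value >= self.target
        return self.value <= self.target


def adjust_rigor(current: str, kpis: list[KPI]) -> str:
    """Hypothetical rule: a missed Ethical KPI raises rigor one level,
    all KPIs met lowers it one level, anything else leaves it unchanged."""
    index = RIGOR_LEVELS.index(current)
    if any(k.dimension == "Ethical" and not k.met() for k in kpis):
        index = min(index + 1, len(RIGOR_LEVELS) - 1)
    elif kpis and all(k.met() for k in kpis):
        index = max(index - 1, 0)
    return RIGOR_LEVELS[index]


kpis = [
    KPI("accuracy", "Technical", value=0.91, target=0.90),
    KPI("latency_ms", "Technical", value=120, target=200, higher_is_better=False),
    KPI("fairness_score", "Ethical", value=0.70, target=0.80),
]
print(adjust_rigor("Medium", kpis))  # fairness target missed, so this prints "High"
```

The point of the sketch is the shape of the loop: measurements feed a decision that changes how the next iteration is run. In practice that decision would be a team judgment informed by the KPIs rather than a fixed threshold rule.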

Traditional projects are often requirements‑driven: they start with a clear specification, build to it, and measure success by how closely the result matches the plan. AI projects are fundamentally different. They are discovery‑driven: the path to value is not known in advance. A team might begin with a promising idea, but the actual outcome emerges only after experimenting with data, testing models, and learning what is technically feasible, ethically acceptable, and truly valuable to users.

This discovery happens across several dimensions:

- Technical discovery: Will the model achieve the needed accuracy? Does it generalise to real‑world data? Which architecture performs best under our constraints?

- Data discovery: Is the available data sufficient, clean, and unbiased? Do we need to collect more or different data? What hidden patterns or gaps emerge as we explore?

- Value discovery: Does the solution actually solve a real problem? Will users adopt it? What business outcomes can it drive?

- Measurement discovery: What KPIs truly reflect success? How do we measure fairness, robustness, or user satisfaction in a way that is both accurate and actionable? Metrics themselves often need to be revised as the project progresses and as the team learns what matters to stakeholders.

- Risk discovery: What new risks appear once the system interacts with the world? How do bias, drift, or regulatory requirements evolve over time?

Because discovery is iterative, the project’s lifecycle, risks, and even its success metrics must adapt as the team learns. Governance cannot be a fixed plan; it must be a responsive framework that allows for course correction while maintaining accountability.

This is why the five pillars of the MIAP framework are connected by two‑way arrows. The project type sets initial assumptions, but as the team navigates the lifecycle, surfaces risks, and measures progress, it may need to revisit earlier decisions: adjusting the level of rigor, re‑evaluating KPIs, or even reclassifying the project type. Discovery‑driven management is not chaos; it is structured flexibility.

This interplay is what makes the framework powerful. The final output is a Tailored Lifecycle that is ready for execution, but always subject to re‑evaluation as the project evolves. It acknowledges that AI projects are discovery‑driven, and that governance must be adaptive.

Key Takeaways

- AI Projects Are Discovery‑Driven, Not Requirements‑Driven – Unlike traditional projects that start with fixed specifications, AI initiatives reveal their true value only through iterative learning across technical, data, value, measurement, and risk dimensions.

- Five Interconnected Pillars Form the MIAP Framework – Project type identification, the adaptive AI lifecycle (APLC), AI‑specific risk management, multi‑dimensional KPIs, and adjustable rigor levels work together, not in sequence.

- One Lifecycle Does Not Fit All – A citizen‑led prototype requires low rigor and pre‑built tools; a medical diagnostic system demands very‑high rigor with human‑in‑the‑loop validation. Rigor levels tailor the depth of each APLC phase.

- KPIs Must Span Four Dimensions – Technical (accuracy, latency), Business (ROI, adoption), Operational (deployment frequency, cloud cost), and Ethical (fairness scores, audit pass rates). These metrics evolve as the project learns.

- Governance Must Be Responsive, Not Fixed – Risks, data drift, and regulatory requirements change over time. The MIAP framework uses two‑way arrows between pillars, allowing course correction while maintaining accountability.

What’s Next

Over the coming issues, we will explore each element in depth. We will walk through the APLC phases, unpack the risk taxonomy, show how to select the right KPIs for your project type, and provide practical guidance on tailoring the lifecycle with rigor levels.

The journey to mastering AI project delivery starts with knowing your project type and continues with a framework that guides you every step of the way.