Welcome to the second instalment of our AI Toolscape series. In the first part, ‘Navigating the AI Tools Landscape: A Map for Successful Delivery’, published in the previous issue of AI Project Pulse, we introduced five categories of specialized tools that enable successful AI project delivery. But before we dive deeper into any single category, it is worth stepping back to clarify a foundational point: what exactly do we mean by “AI Toolscape,” and how is it different from the typical development tools you already know?

The tools in our AI Toolscape exist to answer questions that traditional tools never needed to ask:

- “Is our model fair across all demographic groups?”

- “Has data drift compromised our predictions since last month?”

- “Can we prove to regulators that this AI system was developed responsibly?”

- “How do we detect and respond when an AI agent behaves unexpectedly?”

These are not the tools you use to build AI systems: not your programming languages (Python, R), development frameworks (TensorFlow, PyTorch), foundation models (GPT, Llama, Claude), IDEs, or version control systems. The AI Toolscape we discuss operates at a different layer: it governs, monitors, evaluates, and orchestrates the systems you build with those foundational tools.

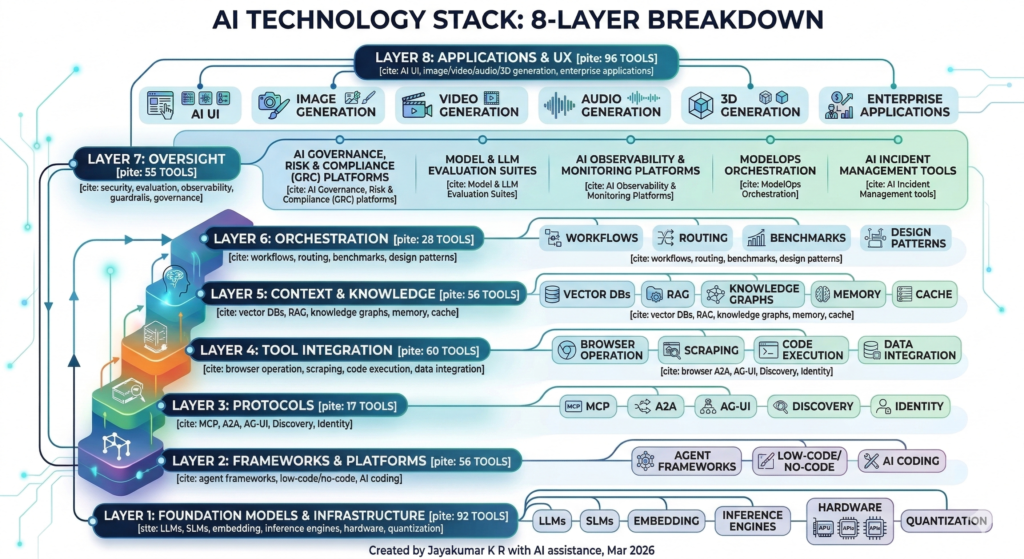

A comprehensive mapping published by Zenn, a Japanese technical publication, on February 17, 2026, surveyed the AI technology stack and identified 460 tools across eight distinct layers and fifty-three subcategories. Its key insight: Layer 1 (Foundation Models & Infrastructure) contains ninety-two tools, including LLMs, SLMs, embedding models, and inference engines, yet building actual products requires tools and infrastructure from the remaining seven layers. The Applications & UX layer alone hosts ninety-six tools, and these counts grow by the day as new offerings continue to flood the market.

In the AI Toolscape series, we are not attempting to cover this entire sprawling ecosystem. Instead, we take a cross-section view aligned with the scope we defined in Issue #1, focusing on the five categories of specialized tools essential for successful AI project delivery. These correspond most directly to Layer 7 (Oversight), which supports Layer 8 (Applications & UX) in the Zenn framework. By concentrating on this critical layer and its intersections with adjacent categories, the series will provide practical guidance on the tools that transform experimental code into trustworthy, scalable business assets.

AI Toolscape Categories

- AI Governance, Risk & Compliance (GRC) platforms establish the ethical and regulatory foundation from the moment an idea is first conceived. Tools like Credo AI, IBM Watsonx.governance, and Monitaur help organizations codify and automate risk rating processes, transforming them into a one-day automated workflow. They ensure that every subsequent decision about data, models, and deployment remains aligned with organizational policies and external regulations, from the earliest feasibility discussions through to long-term operations.

- Model & LLM Evaluation Suites come into their own during the intensive period of model development and validation. Before any AI system reaches users, tools such as Microsoft Fairlearn, IBM AIF360, Weights & Biases, TruEra, and RAGAS rigorously test for bias, robustness, explainability, and performance against quality thresholds. They answer the critical question: “Is this model ready for release?”

- AI Observability & Monitoring Platforms take over once systems are deployed. Fiddler, Arize AI, WhyLabs, Arthur AI, and Evidently AI provide continuous visibility into model health, detecting data drift, performance degradation, and anomalies as they occur.

- ModelOps Orchestration automates the entire pipeline from experimentation to deployment. MLflow, Domino Data Lab, Kubeflow, and cloud-native solutions like Amazon SageMaker MLOps and Azure Machine Learning make moving models from notebooks to production reliable, repeatable, and governed, eliminating the “handoff gaps” that so often derail AI initiatives.

- AI Incident Management tools operationalize how teams respond when things go wrong. Jira Service Management, Splunk ITSI, and PagerDuty, when integrated with observability data, connect detection to resolution, enabling rapid response and continuous learning from failures.
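To make “data drift” more concrete, here is a minimal sketch of one kind of check that observability platforms automate: the population stability index (PSI), which compares a feature's distribution in a baseline window with its distribution in a recent window. This is an illustrative assumption, not how Fiddler, Arize AI, WhyLabs, Arthur AI, or Evidently AI implement drift detection. For simplicity it uses equal-width bins shared by both samples, whereas production tools often bin on baseline quantiles. The function name, the sample data, and the 0.25 alert threshold (a commonly cited rule of thumb) are hypothetical choices.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float], actual: Sequence[float], bins: int = 10
) -> float:
    """Compare two samples of one feature; 0.0 means identical binned distributions."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard: all values identical gives zero width

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            idx = min(int((v - lo) / width), bins - 1)  # clamp the max value into the last bin
            counts[idx] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: last month's baseline vs. this month's, shifted upward.
baseline = [0.1 * i for i in range(100)]
current = [0.1 * i + 3.0 for i in range(100)]

psi = population_stability_index(baseline, current)
if psi > 0.25:  # hypothetical alert threshold
    print(f"Significant drift detected (PSI={psi:.2f})")
```

In a monitoring platform, a check like this would run on a schedule per feature, and a breach would raise an alert that the incident management tooling picks up.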

The true power of understanding the AI Toolscape lies in seeing how each category aligns with the distinct phases of an AI project’s journey, from initial concept through to ongoing operation in the real world. These phases are explored in depth in ‘Managing Innovative AI Projects’, the book I co-authored with Prof. Alain Abran. Subsequent issues of this series will examine in detail how to adopt the AI Toolscape in specific phases of the AI Development Life Cycle.

What’s Next in This Series: Beyond a Buyer’s Guide

With such an abundance of tools comes the risk of “tool fatigue”: teams paying for three to five overlapping subscriptions, using each at a fraction of its capacity, and spending more time evaluating than building. This series aims to fix that by giving you a clear mental model and practical decision frameworks.

In the coming issues, we will explore each tool category in depth. But this series will be more than a simple buyer’s guide or feature checklist. My goal is to provide an implementation primer: practical guidance that helps you move from tool selection to successful deployment within your own AI project lifecycle.

For each category, we will examine:

The Problem It Solves: We will revisit the “why” from Issue #1: the dynamic nature of AI systems, their unprecedented scale, and the novel risks they introduce. Understanding the problem deeply is the first step to recognizing which tools genuinely address your pain points and which create new ones.

Core Capabilities: What should you actually look for? For observability platforms, this means data drift detection, performance monitoring, and explainability integrations. For evaluation suites, it means fairness metrics, robustness testing, and LLM-specific capabilities like hallucination detection and RAG accuracy assessment. We will cut through the marketing noise to identify what matters.
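As an illustration of what a “fairness metric” computes, the sketch below measures demographic parity difference: the largest gap in positive-prediction rates between groups, where 0.0 means every group receives positive predictions at the same rate. It is a plain-Python assumption written for teaching purposes; Fairlearn ships a comparable metric (`fairlearn.metrics.demographic_parity_difference`), and real evaluations usually combine several fairness criteria. The group labels, predictions, and the 0.1 release threshold are hypothetical.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def demographic_parity_difference(
    y_pred: Sequence[int], groups: Sequence[Hashable]
) -> float:
    """Return the largest gap in positive-prediction rate between groups (0.0 = parity)."""
    positives: dict = defaultdict(int)
    totals: dict = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)  # count positive (label 1) predictions per group
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


# Hypothetical binary predictions for applicants from two groups, "A" and "B".
preds = [1, 1, 0, 1, 0, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

gap = demographic_parity_difference(preds, groups)  # A: 3 of 4 positive, B: 1 of 4
ready_for_release = gap <= 0.1  # hypothetical quality threshold
print(f"Parity gap = {gap:.2f}, ready for release: {ready_for_release}")
```

An evaluation suite would typically report metrics like this alongside accuracy and robustness scores, so that a release gate can check them together.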

Integration Points: Tools do not operate in isolation. Using simple diagrams and clear explanations, we will show how each category fits into a coherent ModelOps pipeline. Does it connect to your existing data sources? Can it send alerts to your incident management system? Does it support your cloud provider and deployment architecture? These integration questions often determine whether a tool delivers value or becomes shelfware.

Selection Criteria: When faced with choices such as Fiddler versus Arize versus WhyLabs, or MLflow versus Kubeflow versus cloud-native options, what questions should your team ask? We will develop practical frameworks tailored to different contexts: “Does it integrate with our cloud provider?” “What’s its support for LLMs versus tabular models?” “How does its pricing scale with our usage?” The goal is not to declare a single “best” tool, but to equip you with the right questions for your specific situation.

Key Takeaways

- The AI Toolscape Is Distinct from Development Tools: The tools in our AI Toolscape, such as governance platforms, evaluation suites, observability systems, ModelOps orchestrators, and incident management tools, operate at a different layer from the programming languages and frameworks used to build AI systems. They exist to answer questions traditional tools never needed to ask: Is our model fair? Has data drifted? Can we prove compliance?

- Focus on Five Categories, Not the Entire Ecosystem: With 460 tools catalogued across eight layers of the AI stack, the landscape is overwhelming. Our series deliberately concentrates on five categories essential for successful AI project delivery. This cross-section view, aligned with the oversight layer of the broader ecosystem, provides practical guidance without drowning in noise.

- This Series Will Be an Implementation Primer, Not a Buyer’s Guide: Going beyond feature checklists, upcoming issues will help you identify the core capabilities that matter and ask the right selection questions for your specific context. The goal is to help you move from tool evaluation to successful deployment.